HappyHorse 1.0 Explored: A New Era of AI Video or the Return of a Hidden Giant?

The AI video landscape is undergoing a massive shift in 2026. The industry is still reeling from the news that OpenAI is shutting down Sora to focus on agentic research. Just as that news broke, a mysterious new contender galloped to the top of the charts.

HappyHorse 1.0 appeared almost overnight on the Artificial Analysis Video Arena. It took the #1 spot with a score that leaves the old giants in the dust. Because the model launched without a named team, and rumors of a "white-label" origin are everywhere, one big question remains. Is HappyHorse the savior for creators left stranded by Sora, or is it a rebranded titan known as Z-Video?

What is HappyHorse AI? Breaking Down the 1.0 Release

HappyHorse 1.0 is a next-generation AI video model that uses a unified 40-layer Transformer architecture. Older models usually stitched together separate video and audio parts, but HappyHorse is different. It processes text, images, video, and audio tokens all in a single shared sequence.
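To make the "single shared sequence" idea concrete, here is a minimal Python sketch. It is purely illustrative: the real HappyHorse tokenizer, vocabulary, and token ordering are not public, so the `Token` class, the `MODALITIES` tuple, and the `build_shared_sequence` helper are hypothetical names invented for this example.

```python
from dataclasses import dataclass
from typing import List

# Hypothetical modality tags; the actual model's token layout is not public.
MODALITIES = ("text", "image", "video", "audio")


@dataclass(frozen=True)
class Token:
    modality: str  # one of MODALITIES
    value: int     # placeholder token id


def build_shared_sequence(**streams: List[int]) -> List[Token]:
    """Merge per-modality token ids into one sequence, tagging each token."""
    unknown = set(streams) - set(MODALITIES)
    if unknown:
        raise ValueError(f"unknown modalities: {sorted(unknown)}")
    sequence = []
    # Fixed modality order is an assumption made only for this sketch.
    for modality in MODALITIES:
        for value in streams.get(modality, []):
            sequence.append(Token(modality, value))
    return sequence


seq = build_shared_sequence(text=[101, 102], audio=[7, 8, 9], video=[55])
print([t.modality for t in seq])
# ['text', 'text', 'video', 'audio', 'audio', 'audio']
```

The point of the sketch is that one model sees every modality in the same list, rather than separate video and audio pipelines being stitched together afterwards.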

The Pedigree: Who is Behind the Horse?

After weeks of intense speculation, the industry finally has an answer. HappyHorse 1.0 is the brainchild of Alibaba’s ATH (Alibaba Token Hub) division. The project is reportedly led by Zhang Di, the former Vice President of Kuaishou and the technical architect behind the legendary Kling model. This would explain the model's uncanny ability to handle complex human movements and cinematic lighting: it inherits the DNA of one of the world’s most advanced video AI systems. By launching under the "HappyHorse" pseudonym, Alibaba sidestepped corporate bias and let the raw power of the 15B parameter model speak for itself on the global leaderboards.

The Hugging Face Debut: Technical Capabilities

The official weights on Hugging Face are currently behind a "coming soon" placeholder, but the leaked technical specs are striking. The model has about 15 billion parameters and supports native audio in seven languages, including English, Chinese, and French. Its main strength is the ability to create 1080p high-definition video with perfect lip-syncing in a single pass.
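The parameter count of roughly 15 billion gives a quick way to estimate how much memory the weights alone would need for local use. The sketch below is back-of-the-envelope arithmetic, not a published requirement: the precision of the eventual release is unknown, and the estimate ignores activations, caches, and any optimizer state needed for fine-tuning. The function name `weight_memory_gib` is made up for this example.

```python
def weight_memory_gib(num_params: float, bytes_per_param: int) -> float:
    """Memory needed to hold the weights only, in GiB (2**30 bytes)."""
    return num_params * bytes_per_param / 2**30


PARAMS = 15e9  # approximate parameter count quoted in the article

# Candidate storage precisions; which one the release uses is an assumption.
for name, nbytes in (("fp32", 4), ("fp16/bf16", 2), ("int8", 1)):
    print(f"{name}: {weight_memory_gib(PARAMS, nbytes):.1f} GiB")
# fp32: 55.9 GiB
# fp16/bf16: 27.9 GiB
# int8: 14.0 GiB
```

Even at half precision, the weights would not fit on a typical 24 GB consumer GPU without quantization or offloading, which is worth knowing before planning a local workflow.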

Physics, Fluidity, and Motion: Why Creators are Excited

HappyHorse has achieved what many call "Sora-level" physical consistency. It handles complex movements, like water splashing against a glass or the detailed folding of fabric, with ease. This level of realism moves past the "uncanny valley" and feels natural. For creators who are mourning the loss of Sora, HappyHorse is a new beacon of hope for high-fidelity storytelling.

A New Titan: Can HappyHorse Rival Seedance 2.0?

With the sun setting on Sora in late 2026, the throne for the world’s "Mainstream Video Model" is up for grabs. While ByteDance’s Seedance 2.0 has been the industry benchmark for commercial speed and social integration, HappyHorse 1.0 has officially entered the ring as its head-to-head rival.

The emergence of HappyHorse represents more than just a new tool; it is a shift toward open-weight power that challenges the closed ecosystems of the past. To understand how these giants stack up against each other in the current April 2026 landscape, we have broken down their core capabilities:

| Feature | HappyHorse 1.0 (Alibaba) | Seedance 2.0 (ByteDance) | Sora (OpenAI) |
| --- | --- | --- | --- |
| Access Model | Open Weights / API | Closed (Web & API) | Discontinued (2026) |
| Architecture | 40-Layer Unified Transformer | Dual-Branch Diffusion | Spatiotemporal Patches |
| Audio Support | Native 7-Language Lip-Sync | 8+ Languages / Dubbing | Environmental SFX only |
| Physics | Extreme (Cinematic Logic) | High (Dynamic/Social) | High (Research-grade) |
| Best Use Case | High-Fidelity Storytelling | Viral Content / Ads | N/A |

The Controversy: Is HappyHorse Actually Z-Video in Disguise?

Even with the links to Alibaba's ATH division becoming clearer, the "AI detectives" on the internet are still digging deeper.

Reddit’s Investigation: Analyzing the Weights and Output

On platforms like r/StableDiffusion, many users noticed that the output style of HappyHorse looks exactly like Z-Video. Z-Video is a legendary internal model rumored to be built by Alibaba’s Taotian Group. Technical experts suggest that the name "HappyHorse" is just a pseudonym used while the tool moves from a private enterprise project to a public powerhouse.

The "White-Label" Trend in AI Model Development

We are entering a new era of "White-Label AI." Massive tech companies are now releasing models under fake names to see how the community reacts without risking their main brand reputation. If HappyHorse is indeed a rebranded Z-Video, it is a massive win for the open-weights community. It provides a level of power that used to be locked behind the closed doors of Big Tech.

Why Model "Lineage" Matters for Commercial Creators

While understanding the engine behind the video is vital for safety, the ultimate goal for most creators remains the same: conversion. This is where the gap between cinematic art and commercial reality appears. For a professional creator, knowing where a model comes from is more than trivia. It is about commercial safety and peace of mind.

1. Licensing: If HappyHorse is an unofficial leak of Z-Video, using it for high-stakes ads could lead to copyright problems later.

2. Reliability: Rebranded models sometimes lack the long-term API support that established platforms provide for their users.

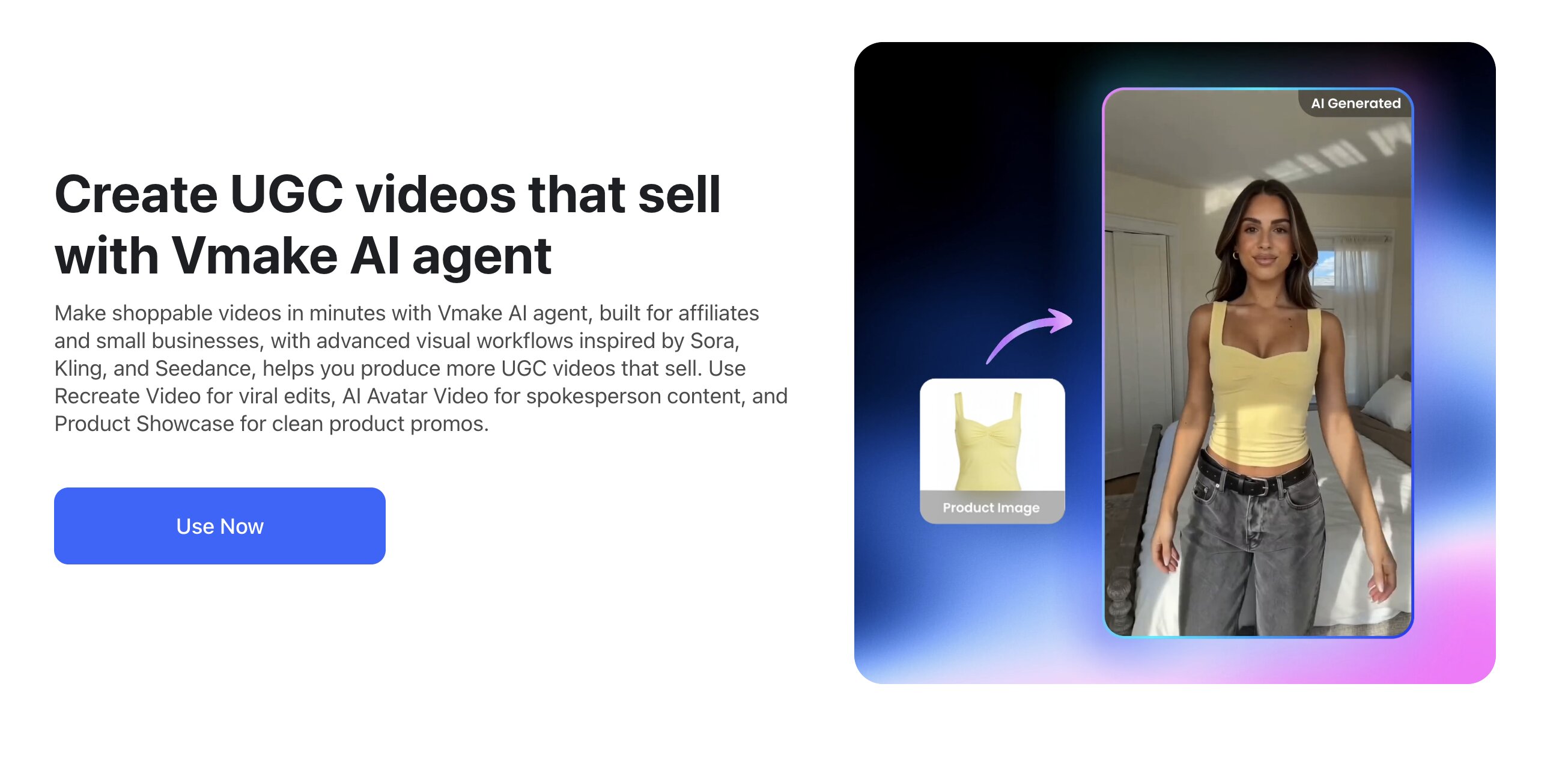

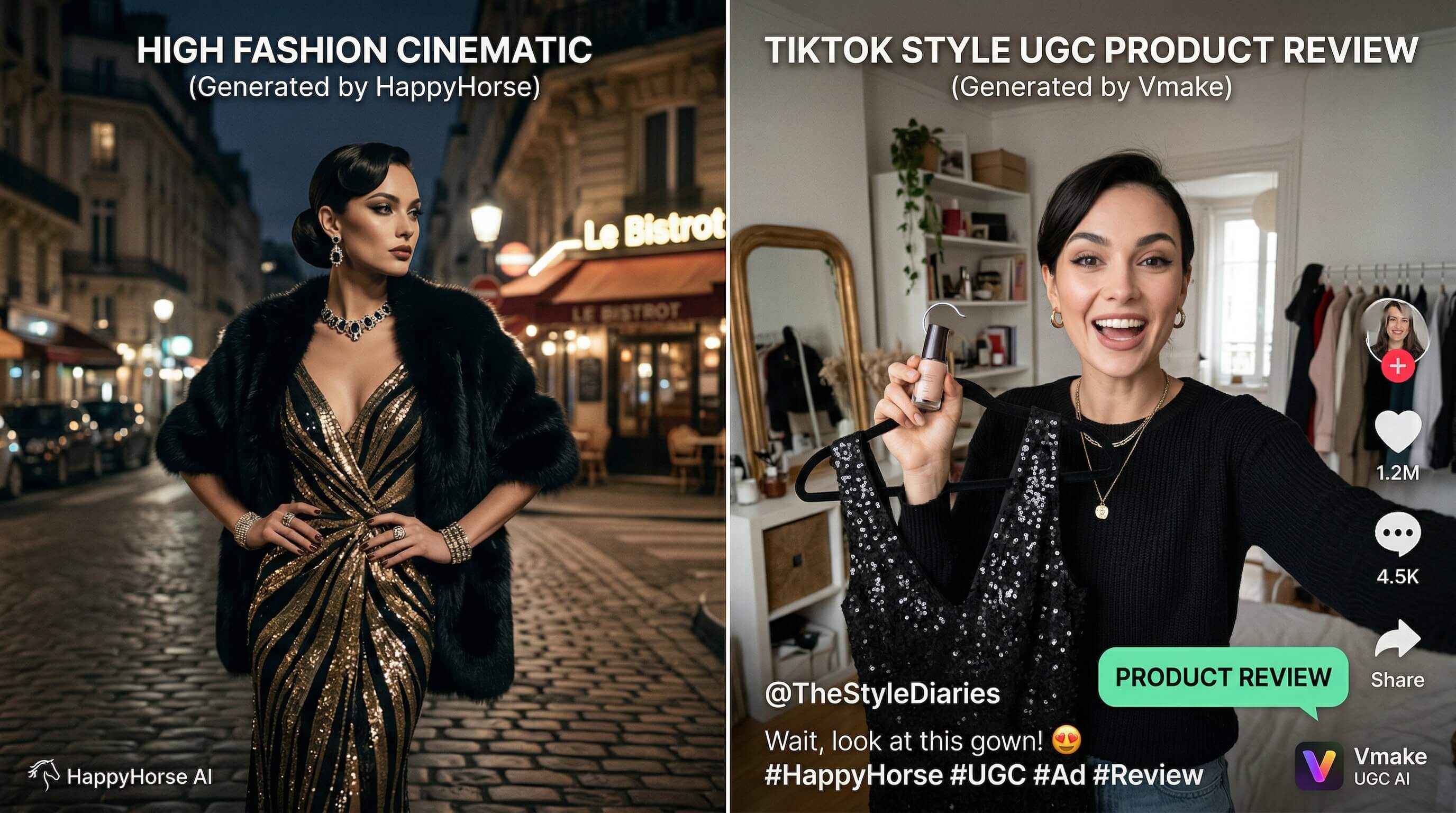

Beyond Realistic Vistas: Generating Shoppable UGC with Vmake

While HappyHorse 1.0 is the king of cinematic views and movie-like physics, it is not always the fastest path to making a sale. High-art AI video can sometimes look too perfect. It can lose that "authentic" touch that modern shoppers really crave.

Why HappyHorse and Vmake Serve Different Needs

- HappyHorse 1.0: This is best for "Wow Factor" visuals, world-building, and high-budget atmospheric scenes.

- Vmake: This is the ultimate tool for shoppable UGC video. Vmake focuses on the "User-Generated Content" look. It makes videos look like they were filmed by a real person on a smartphone. This style is proven to convert 3 times better in the world of e-commerce.

Turning Cinematic AI Video into High-Conversion Ads

The best strategy in 2026 is a hybrid one. You can use HappyHorse to generate a breathtaking cinematic background and then use Vmake UGC Video Generator to insert your product. Vmake helps you create a relatable and shoppable overlay. This combination gives you the visual prestige of a top-tier model along with the conversion power of a dedicated marketing tool.

Practical Tips for Using HappyHorse 1.0 in Your Workflow

- Exploit the Audio-Visual Link: Since HappyHorse creates audio and video at the same time, use prompts that describe specific sounds. Mention the crunch of dry leaves under boots to see how well the model aligns sound with motion.

- Monitor the 'Coming Soon' Pages: Keep a very close watch on the official Hugging Face links. The moment those weights drop, the "first-mover" advantage for local fine-tuning will be huge.

- Cross-Platform Rendering: Use HappyHorse for the complex physics scenes that other models fail to do. Then, stitch those scenes into your Vmake workflow for final distribution and sales.
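The first tip above, describing specific sounds in the prompt, can be turned into a small reusable helper. This is a sketch under assumptions: HappyHorse's prompt format is not documented, so the code simply builds a plain-text prompt, and the `build_av_prompt` function and its "Audio:" label are hypothetical conventions chosen for illustration.

```python
from typing import Sequence


def build_av_prompt(scene: str, sounds: Sequence[str]) -> str:
    """Combine a visual scene description with explicit sound cues."""
    scene = scene.strip().rstrip(".")
    # Drop empty cues so the prompt never ends with a dangling separator.
    cues = [s.strip() for s in sounds if s.strip()]
    if not cues:
        return f"{scene}."
    return f"{scene}. Audio: {'; '.join(cues)}."


prompt = build_av_prompt(
    "A hiker walks through an autumn forest",
    ["the crunch of dry leaves under boots", "wind in the branches"],
)
print(prompt)
# A hiker walks through an autumn forest. Audio: the crunch of dry leaves
# under boots; wind in the branches.   (printed on one line)
```

Keeping the sound cues in a separate list makes it easy to run the same scene with and without audio descriptions and compare how closely the generated sound follows the motion.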

FAQ: Everything You Need to Know About HappyHorse

Q: Is HappyHorse a replacement for Sora?

A: With OpenAI's Sora shutting down its app and API in late 2026, HappyHorse 1.0 is currently the highest-ranked alternative on the market. It offers similar physics but within a much more open ecosystem.

Q: Does HappyHorse 1.0 support multi-language lip-sync?

A: Yes. It natively supports 7 languages including English, Japanese, and German. This makes it a powerful tool for global marketing.

Q: Where can I try HappyHorse for free?

A: Some platforms like Dzine have already put HappyHorse 1.0 into their interface. This allows users to test the model before the official weights are released on Hugging Face.

Conclusion: The Future of Transparent AI Video

The rise of HappyHorse 1.0 marks a major turning point in the AI world. Between the Z-Video rumors and the end of Sora, we are moving away from centralized platforms and toward a more specialized and powerful landscape.

Whether you choose the cinematic heights of HappyHorse or the conversion-driven efficiency of Vmake, one thing is clear. The horse has left the barn and the future of AI video is faster and more realistic than we ever imagined. Ready to turn AI power into actual sales? While you wait for the HappyHorse weights, start creating high-converting shoppable videos with Vmake UGC Video Generator today.
